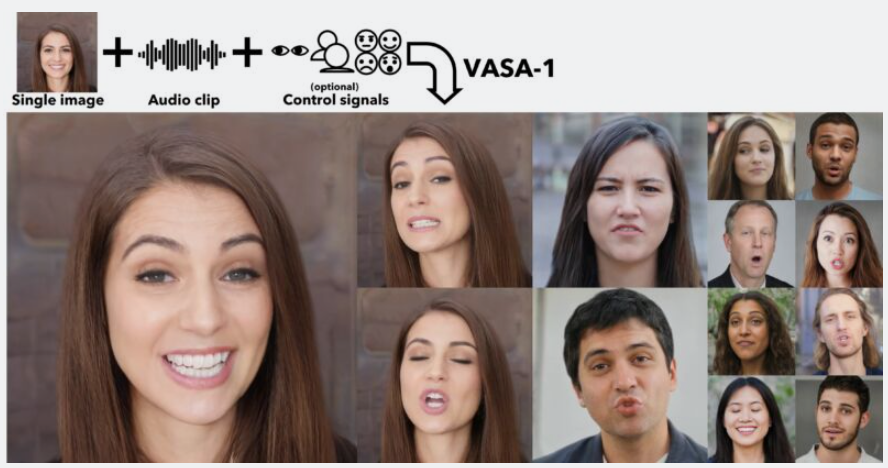

Image credit: Microsoft

Microsoft has unveiled VASA-1, an AI framework that can generate lifelike videos from a single still image and an audio sample. VASA-1 uses machine learning to animate the photo and synchronize the face's movements with the audio, producing a convincing talking avatar. The work has drawn significant attention from technology enthusiasts and industry experts alike.

Imagine this: a cherished photo of your grandma suddenly comes alive to tell you a bedtime story in her own voice, her face crinkling with familiar warmth as she speaks. It's the kind of heartwarming moment that could bring tears to your eyes, and it is exactly what Microsoft's new tool, VASA-1, makes possible.

But wait, a knot forms in your stomach. What if someone used this technology to make a video of your grandma saying something she never did? A chilling possibility, right? This is the double-edged sword of VASA-1: a tool with the potential to create beautiful, sentimental experiences, but also one that could be misused to spread lies and manipulate the truth.

VASA-1 takes a photo and an audio clip as input, then generates a video in which the person in the photo appears to speak the words in the audio. The possible applications are broad. Imagine virtual assistants that can truly connect with us, their expressions mirroring our emotions, or tools that help people with communication difficulties express themselves.

However, this power has a dark side. Deepfakes, fabricated videos designed to deceive, are a growing concern. Malicious actors could use VASA-1 to create fake news stories or smear campaigns, eroding trust in everything we see online. The potential impact on public discourse and media integrity is deeply concerning.

Microsoft, to its credit, understands this responsibility. Recognizing the potential for misuse, the company has chosen not to release VASA-1 to the public. This commitment to ethical development sets an important example for the tech industry.

The future of VASA-1 and similar technologies remains uncertain, and collaboration between developers, policymakers, and the public will be crucial. We need robust safeguards to prevent misuse, clear regulations to govern its use, and protections for user privacy and consent. Transparency is also key: whenever VASA-1-generated content is used, it should be clearly disclosed as such.

Microsoft's VASA-1 represents a significant milestone in the field of AI. It pushes the boundaries of what's possible and has the potential to transform the way we communicate and experience digital content. But its potential for misuse cannot be ignored.

That risk demands careful consideration and collaboration. By prioritizing responsible development and ethical safeguards, we can help ensure that AI technologies like VASA-1 become a force for positive change rather than a tool for manipulation and deceit.

[Credit: Information sourced from Ars Technica]